You might have heard an argument like this: “I don’t have time to write tests, I’m too busy implementing this feature.” As an automated testing fan, how would you reply? Automated tests do take time to write and maintain; that much is undeniable. Luckily, reality is not that simple: the statement above rests on the assumption that automated tests are pure liabilities that generate no return on investment. As we are going to see, automated tests not only bring value in the long term but are valuable even before the code is written.

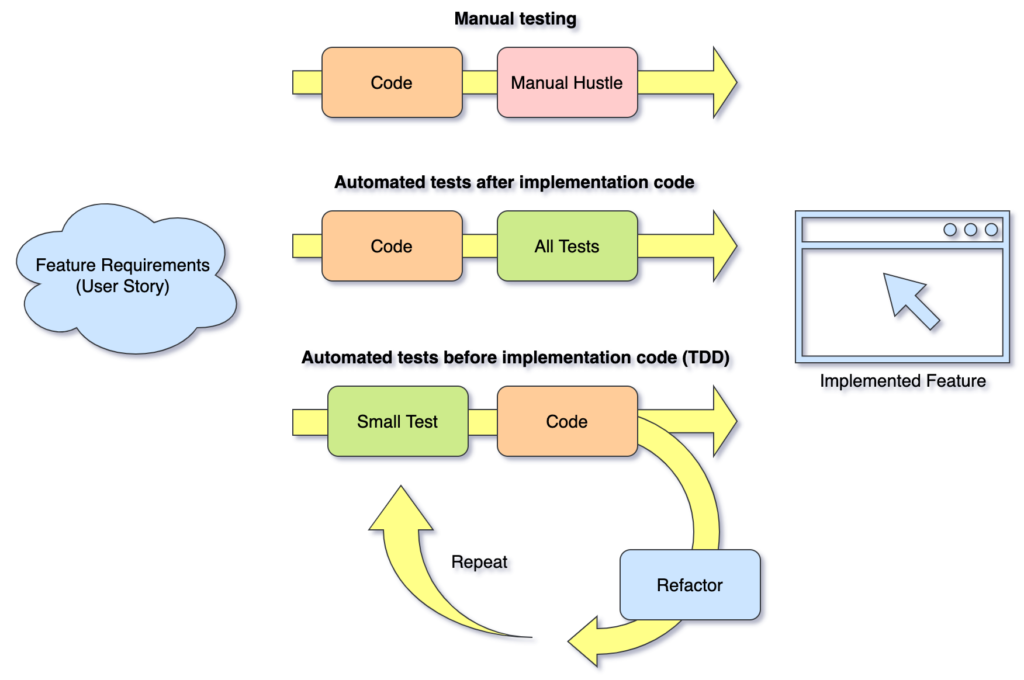

To show this, we are going to compare three testing strategies and see which one brings the best results.

- No automated tests (manual testing)

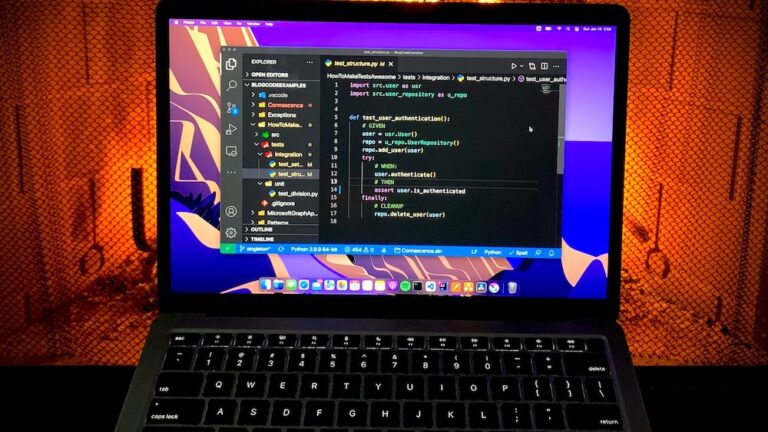

- Automated tests after code implementation

- Automated tests before implementation code (Test Driven Development, TDD)

How do we compare these strategies? We cannot compare them in familiar units like time, man-hours or money, because the mileage varies with each use case. Instead, we are going to use a simple point system. The strategy that wins a category gets a point, and if more than one strategy wins, each of them gets a point. With that in mind, let’s dive in.

Development speed

“How fast is this going to be done?” is, of course, the eternal question. One reason some people abandon the idea of automated testing is that they believe writing tests slows them down. That may seem true, but it is not that simple and needs some context. Let’s consider three development use cases:

- Writing proof-of-concept (PoC) code or one-time throw-away code.

- Starting a brand new project, or working on a relatively new project with little code written so far.

- Adding a new feature to a mature project in production.

PoC and one-time throw-away code

While the code base is small and has relatively few use cases implemented, manual testing suffices because there are not many invariants to worry about. Moreover, the goal of PoC code is to verify an assumption. PoC code is not meant to run in production, so maintenance is not a concern and manual testing is enough for this use case.

For one-time throw-away code, the manual testing strategy gets an edge and receives a point. It is quite rare, but if the PoC code grows more complex, it may become worthwhile to start adding automated tests to speed up development.

Brand new project

When starting a new project, it may be tempting to bang out code fast and worry about tests later, but that is usually not a good idea. Yes, feature development may take a little longer at the beginning. Soon enough, though, the automated tests pay off and start giving your development time back. The more features you develop, the more time you get back that would otherwise have gone into manual testing and bug fixing. And since the project was designed and developed with automated tests from the ground up, it is easy to keep following the strategy and continue reaping the benefits.

For this reason, both automated testing strategies win a point for projects that are here to stay.

Mature project

We may consider a project mature when it has been running in production for a considerable length of time. Some mature projects are actively developed, while others receive only infrequent updates.

Mature actively developed projects

Actively developed projects that already use automated tests will benefit from continuing down that path. Projects without automated tests will also benefit from introducing them later. However, it is harder to write automated tests for code that was never designed with testing in mind.
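To illustrate why retrofitting tests can be hard, here is a hypothetical before/after sketch. A function that reads the system clock internally can only be tested by patching the clock. A version that accepts the time as a parameter is trivial to test. Both functions and the greeting rule are invented for this example. In a real code base, the hidden dependency could just as well be a database, a network call or global state, and pulling it out is the kind of refactoring mentioned above.

```python
import datetime
import unittest


# Hypothetical "legacy" version: it reads the system clock internally,
# so a test cannot control what "now" is without patching datetime.
def greeting_legacy():
    hour = datetime.datetime.now().hour
    return "Good morning" if hour < 12 else "Good afternoon"


# Test-friendly version: the caller may supply the time (defaulting to
# the real clock), so a test can pass in any moment it needs.
def greeting(now=None):
    if now is None:
        now = datetime.datetime.now()
    return "Good morning" if now.hour < 12 else "Good afternoon"


class GreetingTest(unittest.TestCase):
    def test_morning(self):
        self.assertEqual(greeting(datetime.datetime(2024, 1, 1, 9)), "Good morning")

    def test_afternoon(self):
        self.assertEqual(greeting(datetime.datetime(2024, 1, 1, 15)), "Good afternoon")
```

Both versions behave the same in production. Only the second one lets a test pin down the time without touching global state.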

Automated testing strategies get the edge for mature, actively developed projects, so both receive a point.

Mature projects on maintenance

Projects in maintenance mode may still receive updates, though infrequently. These are usually security patches, bug fixes and, rarely, new features. If the code already has automated tests, it makes sense to keep following that strategy. If tests are absent, however, a developer may decide not to introduce them. Doing so would most likely require significant refactoring of code that works and rarely needs to change.

For this type of project, where the return on investment from automated tests is insignificant or even negative, the manual testing strategy may receive a point.

Number of bugs in production

Humans are not good at repetitive tasks that require continuous focus. We tire quickly, which raises the likelihood of an error and, with it, the chance of a bug slipping into production. That is why we delegate repetitive work to computers, which are very good at it. And that is exactly what automated testing is about: offloading the repetitive execution of test cases to computers.
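A table-driven test is one common way to hand repetitive checks to the computer. The sketch below uses Python's standard `unittest` module with `subTest`. The leap-year function is just a familiar stand-in for code under test and does not come from this article.

```python
import unittest


def is_leap_year(year):
    """Gregorian rule: divisible by 4, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


class LeapYearTest(unittest.TestCase):
    # Each row is a case a person would otherwise re-check by hand after
    # every change; the computer re-runs all of them, every time.
    CASES = [
        (2024, True),   # divisible by 4
        (2023, False),  # not divisible by 4
        (1900, False),  # century not divisible by 400
        (2000, True),   # century divisible by 400
        (2100, False),
        (1600, True),
    ]

    def test_known_years(self):
        for year, expected in self.CASES:
            # subTest reports each failing row separately instead of
            # stopping at the first mismatch.
            with self.subTest(year=year):
                self.assertEqual(is_leap_year(year), expected)
```

Adding another case is one more line in `CASES`. Re-running all of them costs nothing but machine time.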

Obviously, a point goes to both automated testing strategies.

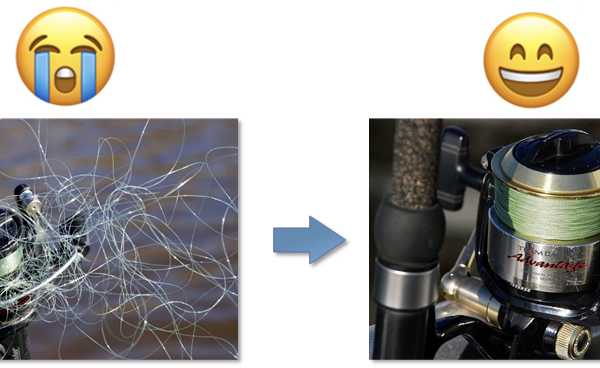

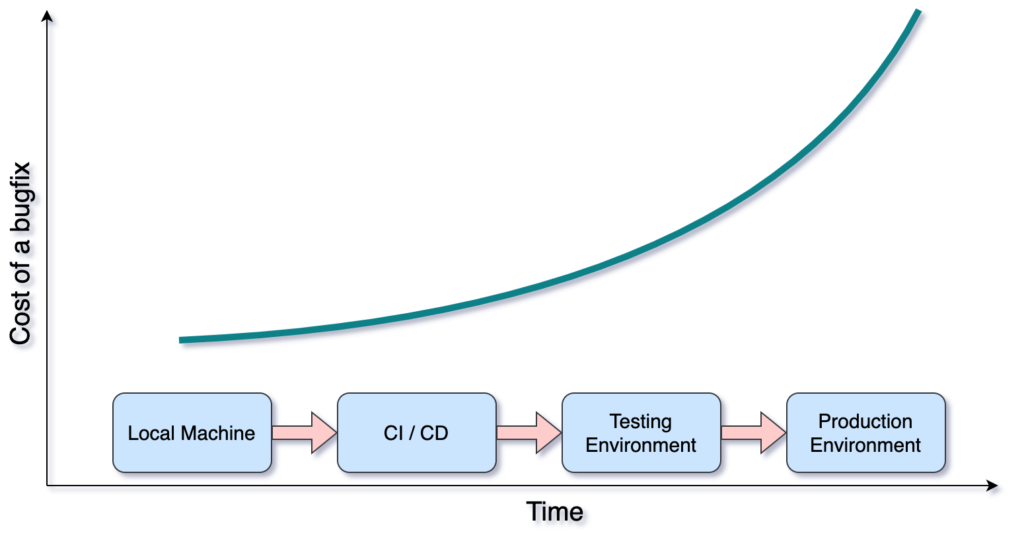

Cost of fixing a bug

The later a bug is discovered, the more effort it takes to fix. Over time we lose the context of the problem we worked on, and with it our ability to fix bugs efficiently. For example, it is much easier to fix a bug while still developing the code on a local machine than to track down a problem in code that was deployed a month ago. Consider the development process shown in the figure: the further along it we move, the costlier it becomes to fix a bug.

Automated tests increase the probability of discovering bugs early in the development process, because the tests are written along with the code itself. So, without a doubt, a point goes to both automated testing strategies.

Debugging Free

With manual testing we tend to debug a lot. We put breakpoints throughout the code to make sure the expected values are returned, the correct execution path is taken, and the proper error is raised and handled. The manual cycle of running the code, inspecting the IDE’s watch window and checking the execution path goes on and on.

With automated tests, assertions check variable values, execution paths, error handling and so on automatically, so the need for debugging largely disappears. Of course, we may still set a few breakpoints to debug a failing test, but we don’t have to do that for every test case that passes. This saves a huge amount of time and effort. Because automated tests reduce the need for debugging, we grant a point to both automated testing strategies.
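Here is a sketch of how assertions take over the checks we would otherwise make in a debugger: the return value, the execution path taken, and the error raised. `withdraw`, `InsufficientFunds` and the 1000 threshold are hypothetical. A `unittest.mock.Mock` stands in for a collaborator so the test can verify which branch ran.

```python
import unittest
from unittest import mock


class InsufficientFunds(Exception):
    """Hypothetical error type for this example."""


def withdraw(balance, amount, notify):
    """Hypothetical function under test: returns the new balance,
    calls notify() on large withdrawals, raises on overdraft."""
    if amount > balance:
        raise InsufficientFunds(f"cannot withdraw {amount} from {balance}")
    if amount >= 1000:
        notify(amount)  # the "large withdrawal" execution path
    return balance - amount


class WithdrawTest(unittest.TestCase):
    def test_returns_new_balance(self):
        # Replaces inspecting the return value in a watch window.
        self.assertEqual(withdraw(500, 200, notify=mock.Mock()), 300)

    def test_large_withdrawal_takes_notification_path(self):
        # Replaces a breakpoint inside the "amount >= 1000" branch.
        notify = mock.Mock()
        withdraw(5000, 1500, notify=notify)
        notify.assert_called_once_with(1500)

    def test_small_withdrawal_skips_notification_path(self):
        notify = mock.Mock()
        withdraw(5000, 10, notify=notify)
        notify.assert_not_called()

    def test_overdraft_raises(self):
        # Replaces stepping through the code to see which error is raised.
        with self.assertRaises(InsufficientFunds):
            withdraw(100, 200, notify=mock.Mock())
```

Save the file and run it with `python -m unittest`. A failing assertion names the exact check that broke, which is usually where a breakpoint would have gone.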

Reduction of unnecessary code

Test Driven Development (TDD) is built around a tests-before-code approach. Followed thoughtfully, it has an interesting side effect: less code gets written to support a feature. Because we like to think ahead, we tend to write a little more code than a specific feature needs. We strive to be future-proof and make code more generic and reusable, even where that is not needed at the moment and might never be. With TDD, we first write a test that verifies a specific piece of feature behavior. Then we write just enough code to make the test pass and no more, refactor if needed, and repeat. The refactoring step leaves us with cleaner code, and we end up with just enough code to implement the feature.
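The red-green-refactor cycle described above can be sketched in Python with the standard `unittest` module. The `slugify` function and its requirements are hypothetical, chosen only for illustration. Note how the implementation stops at exactly what the two tests demand.

```python
import re
import unittest


# Step 1 (red): write the tests first. Before slugify() exists,
# running them fails with a NameError.
class SlugifyTest(unittest.TestCase):
    def test_lowercases_and_joins_words_with_hyphens(self):
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_drops_punctuation(self):
        self.assertEqual(slugify("Tests, please!"), "tests-please")


# Step 2 (green): write just enough code to make the tests pass.
# No configurable separator, maximum length or Unicode transliteration:
# no test asks for them yet, so they are not written.
def slugify(text):
    words = re.findall(r"[a-z0-9]+", text.lower())
    return "-".join(words)


# Step 3 (refactor): with the tests green, clean up freely and re-run
# them; then repeat the cycle with the next failing test.
```

If a future requirement calls for, say, a custom separator, it starts life as a new failing test rather than as speculative code.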

Because of this interesting property, the TDD strategy receives a point.

Interface design

When designing a new API (a service, a library, or an application’s public classes and functions), it is extremely helpful to have at least one client of the API. A client in this context is code that uses the new API for a meaningful purpose. Through the client we can verify design assumptions and look at the API from the consumer’s perspective. We can check how easy the API is to use, whether it provides sufficient functionality, and so on.

In the absence of a real client, automated tests can fill that role. Writing a test lets a developer switch hats and view the API from a different perspective. TDD goes even further: since we write a test before implementing the feature, we get to exercise the API before it even exists. If we take the other approach and write the full implementation first, we may discover issues with the API only when writing tests later. Then we have to spend time refactoring the API and rewriting the implementation.
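Here is a minimal sketch of a test acting as the first client of an API, assuming a hypothetical shopping-cart class. Writing the calls first forces concrete design decisions before any implementation exists. In this case, for instance, it raises the questions of whether `quantity` should be optional and whether prices should be integer cents rather than floats. `Cart`, `add` and `total_cents` are invented names for this example.

```python
import unittest


# The test is the API's first client: it is written before Cart exists
# and pins down how callers will use it.
class CartTest(unittest.TestCase):
    def test_total_of_several_items(self):
        cart = Cart()
        cart.add("apple", unit_price_cents=50, quantity=4)
        cart.add("bread", unit_price_cents=250)  # quantity defaults to 1
        self.assertEqual(cart.total_cents(), 450)

    def test_empty_cart_total_is_zero(self):
        self.assertEqual(Cart().total_cents(), 0)


# Implementation written afterwards to satisfy the interface above.
class Cart:
    def __init__(self):
        self._lines = []  # (name, unit_price_cents, quantity) tuples

    def add(self, name, unit_price_cents, quantity=1):
        self._lines.append((name, unit_price_cents, quantity))

    def total_cents(self):
        return sum(price * qty for _, price, qty in self._lines)
```

Had the test shown the calls to be awkward, changing the interface at this point would have cost nothing, because no implementation yet depended on it.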

Though both automated testing strategies are great for validating APIs, TDD takes it to another level, so it receives a point.

Long term maintenance

For long-term maintenance, it is important to have documentation that new developers joining the team can use to learn about the application’s features and behavior. Tests serve as documentation that is as close to the code as it could possibly be. They automatically validate the implementation and cannot silently go out of date, because an outdated test fails. If a test starts failing after a new feature is implemented, the developer has to either update the test to reflect the new requirements or fix the code that broke it.
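As a small illustration of tests as documentation, the test names alone spell out the hypothetical password rules below. The function and its rules are made up for this example.

```python
import unittest


def is_valid_password(password):
    """Hypothetical rule set used only for this example."""
    return (
        len(password) >= 8
        and any(c.isdigit() for c in password)
        and any(c.isupper() for c in password)
    )


class PasswordPolicy(unittest.TestCase):
    """Reads as a specification of the password rules."""

    def test_accepts_8_or_more_chars_with_a_digit_and_an_uppercase_letter(self):
        self.assertTrue(is_valid_password("Secret123"))

    def test_rejects_passwords_shorter_than_8_characters(self):
        self.assertFalse(is_valid_password("Ab1"))

    def test_rejects_passwords_without_a_digit(self):
        self.assertFalse(is_valid_password("NoDigitsHere"))

    def test_rejects_passwords_without_an_uppercase_letter(self):
        self.assertFalse(is_valid_password("lowercase123"))
```

Running the file with `python -m unittest -v` lists every test name next to its result, so the output reads like a checklist of the current rules.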

Because of this important property, both automated testing strategies deserve a point.

It is fair to mention that automated tests require maintenance effort. However, that effort is far outweighed by the time and resources that manual testing and production bug fixing consume.

Enjoyment

Manual testing is a repetitive process that requires full concentration, yet it lacks the creativity and joy of problem solving. As a result, it rapidly drains mental reserves that would be better spent on problem solving and other creative tasks. I personally haven’t met a developer who looks forward to spending a few hours manually clicking through an application to prove that their changes did not break any existing features. There is no enjoyment in manual testing, yet joy in our work is a huge factor in keeping us excited, motivated and productive.

Because manual testing lacks that joy, a point goes to both automated testing strategies, which help us stay focused on creative tasks.

Conclusion

We walked through the different categories, and this is what the scoreboard looks like:

| No automated tests (manual testing) | Automated tests after code implementation | Automated tests before implementation code (TDD) |
|---|---|---|
| 2 | 7 | 9 |
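To double-check the arithmetic, the scoring rule from the beginning (one point to every winning strategy in a category) can be replayed in a few lines of Python. The mapping of categories to winners is transcribed from the verdicts above. The short strategy labels are just names chosen for this sketch.

```python
from collections import Counter

MANUAL, AFTER, TDD = "manual", "tests after code", "TDD"

# Winners of each category, as awarded in the sections above.
winners = {
    "PoC and one-time throw-away code": [MANUAL],
    "Brand new project": [AFTER, TDD],
    "Mature actively developed project": [AFTER, TDD],
    "Mature project on maintenance": [MANUAL],
    "Number of bugs in production": [AFTER, TDD],
    "Cost of fixing a bug": [AFTER, TDD],
    "Debugging free": [AFTER, TDD],
    "Reduction of unnecessary code": [TDD],
    "Interface design": [TDD],
    "Long term maintenance": [AFTER, TDD],
    "Enjoyment": [AFTER, TDD],
}

# One point per winning strategy per category; a tie awards every winner.
scores = Counter(s for strategies in winners.values() for s in strategies)
print(scores[MANUAL], scores[AFTER], scores[TDD])  # 2 7 9
```

The two-point gap between the automated strategies comes entirely from the two TDD-only categories: unnecessary code and interface design.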

TDD appears to bring the highest return on investment of the three approaches, closely followed by the “automated tests after code” strategy. But how does this answer the question from the title, “Automated testing slows me down, doesn’t it?” In every category they win, the automated testing strategies reduce manual work, lower the probability of bugs that are expensive to fix, and eliminate the need to rewrite code multiple times. By removing these unproductive and wasteful activities, they increase development speed. Yes, writing automated tests may slow development down at the beginning. Soon, though, the positive effect becomes large enough to compensate for the effort spent on tests, and it keeps compounding over time.

However, it is important to realize that the value of automated testing depends heavily on the quality of the tests: the higher the quality of a test suite, the more value it brings.

If you feel I was unfair to manual testing and failed to make an honest comparison, please share your thoughts. I will be happy to learn from you and incorporate your feedback into this post.

More on how to make your automated tests awesome here.

As always, happy coding!

Bonus

As a bonus, below is a comparison table of the three testing strategies.

| Category | No automated tests (manual testing) | Automated tests after code implementation | Test Driven Development (TDD) |
|---|---|---|---|
| PoC and one-time throw-away code | 👍 | | |
| Brand new project | | 👍 | 👍 |
| Mature actively developed project | | 👍 | 👍 |
| Mature projects on maintenance | 👍 | | |
| Number of bugs in production | (Higher probability of bugs) | 👍 (Fewer bugs) | 👍 (Fewer bugs) |
| Cost of fixing a bug | | 👍 | 👍 |
| Debugging Free | | 👍 | 👍 |
| Reduction of unnecessary code | | | 👍 |
| Interface design | | | 👍 |
| Long term maintenance | | 👍 | 👍 |
| Enjoyment | | 👍 | 👍 |